How KPM-1 works

Kronaxis Persona Model 1 (KPM-1) is a synthetic-panel system for UK political prediction. It draws representative voter panels from a 65,000-persona simulated UK electorate, asks each persona two questions, and aggregates the result through a calibrated nine-layer pipeline. We pre-register because more light on the predictions is more useful than less. The May 7 2026 council elections are the first public election test of the methodology.

Predictions are public. Hash is public. Model is private.

The SHA-256 hash of the predictions JSON was committed to a public GitHub repository before any ballot opened. Anyone can verify on 8 May that the numbers were not adjusted after results came in. KPM-1 itself, the 65,000-persona UK dataset, and the calibration pipeline are proprietary to Kronaxis Limited. Commercial enquiries: jason@kronaxis.co.uk.

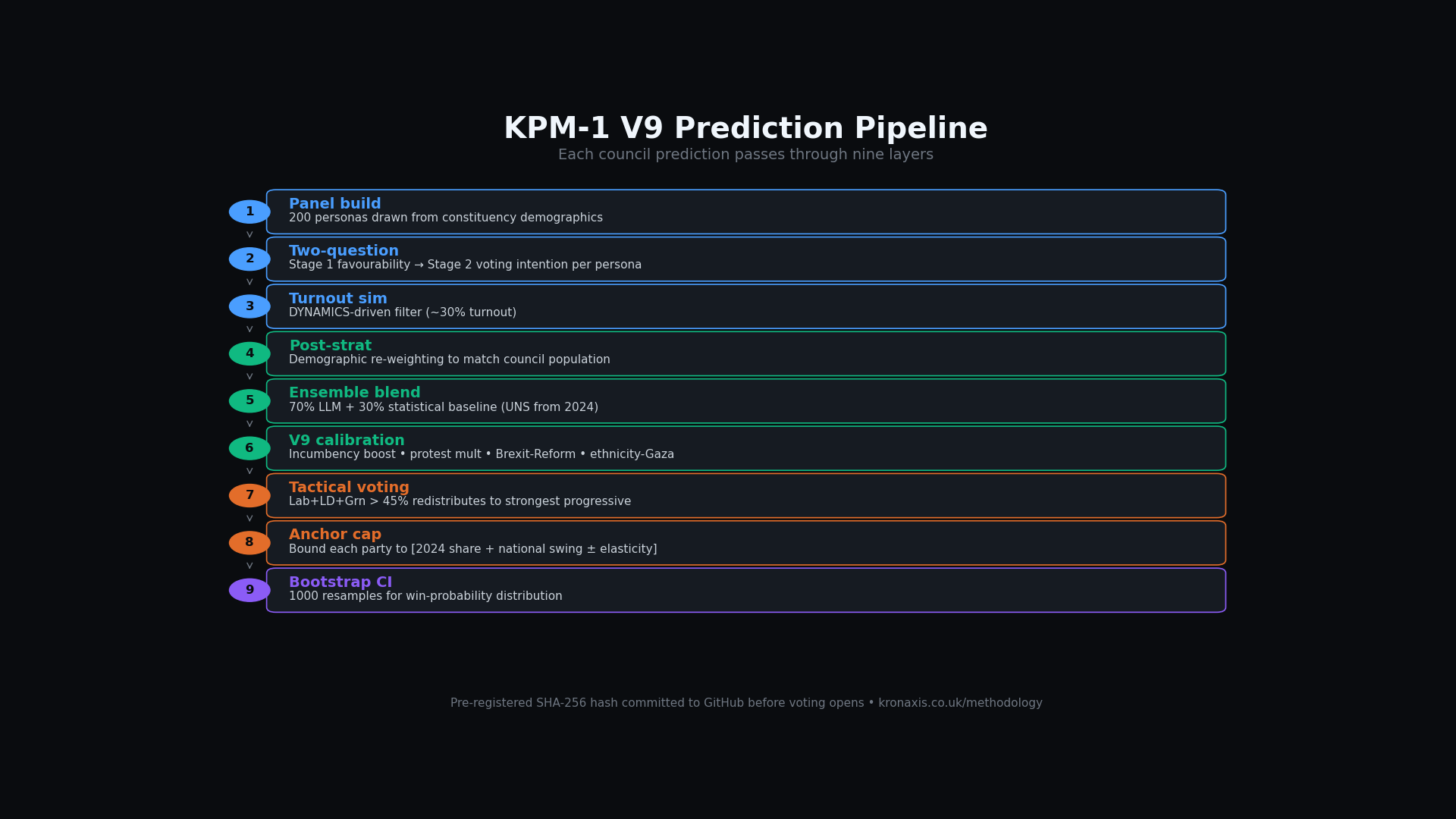

The V9 prediction pipeline

Each council prediction passes through nine layers. The first three build a representative panel from the underlying persona corpus and ask each one how they intend to vote. Layers 4 to 6 normalise and calibrate the raw output. Layers 7 to 9 enforce structural plausibility against 2024 General Election results and produce the keystone confidence bounds.

Why the calibration is necessary

Raw LLM output for political prediction has known systematic biases: over-prediction of Reform UK in strong-Leave seats, under-prediction of Labour in diverse metro boroughs, and a general tendency to amplify the latest national-narrative signal. The V9 calibration architecture applies four working tools in parallel — incumbency boost, protest multiplier, Brexit-Reform correlation, and ethnicity-Gaza scaling — with the relational anchor cap as the final step. Together they are what makes the raw output credible at the council level.

The relational anchor cap, in plain English

For every party in every council, we compute an "anchor" share: the party's 2024 share in that specific council, plus the 2024-to-2026 national swing for that party. The model's projected share is then squared against this anchor and bounded to anchor ± 10 percentage points (or ± 5 percentage points for parties with strong local prev share above 40%). This is uniform-national-swing with local elasticity — a standard psephological correction that prevents the LLM-induced "hallucinated swing" pathology, where the model decides Reform is on 35 % in Newham because the headlines say so.

Public vs proprietary

Kronaxis publishes the predictions and the pre-registration hash so anyone can verify on 8 May that the numbers were not tweaked after results came in. The model itself, the 65,000-persona UK dataset, the calibration logic, and the political LoRA adapter are proprietary to Kronaxis Limited and not available for reuse.

| Item | Status |

|---|---|

| Predictions JSON (per-council vote shares) | Public — github.com/Kronaxis/kpm1-election-projections |

| Pre-registration hash (SHA-256) | Public — same repo, committed before voting opened |

| Methodology paper (high-level) | Public — see /research |

| 103-benchmark validation results | Public — paper appendix |

| KPM-1 model weights / political LoRA adapter | Proprietary — Kronaxis Limited |

| 65,000-persona UK dataset | Proprietary — Kronaxis Limited |

| Calibration pipeline source code | Proprietary — Kronaxis Limited |

| Per-persona reasoning traces | Proprietary — available under commercial licence |

The published predictions are the verifiable, falsifiable output. The underlying machinery is the company's product — available to commercial partners under licence. Contact for commercial enquiries.

How predictions are validated

103 cross-domain benchmarks. Each is a published opinion-survey result from BSA (British Social Attitudes), ESS (European Social Survey), BES (British Election Study), NRS (National Readership Survey), Eurobarometer, NHS Confederation, Migration Observatory, and ONS.

For each benchmark, the panel is asked the same question and the result compared to the published real-world data. Aggregate accuracy across all 103: average gap 1.4 percentage points, max 4.1pp, min 0.0pp. Specific accuracy on the 17 voting-intention benchmarks: average gap 0.8pp, max 2.2pp.

Important caveat: cross-domain consistency is necessary but not sufficient for forecasting accuracy. The 103-benchmark work shows that the panel reproduces opinion-distribution data well; it does not automatically prove that aggregating individual-persona vote intentions reproduces a real council-level result. 7 May 2026 is the first time that question is put to a falsifiable test.

Known biases (we publish these)

In v5 (1 May 2026):

1. Lab under-prediction in diverse metro boroughs

The LLM persona simulation under-weights Labour share in councils with 30%+ Asian populations. Affects Birmingham, Bradford, Brent, Ealing, and others. v5 applies the LAB_GE_PREV_OVERRIDE table to lift the anchor floor for major Lab metros. Both pre- and post-override numbers are documented in the methodology JSON.

2. Reform over-prediction in strong-Leave seats

The brexit-Reform correlation correction can over-fire in seats with Leave > 65%. The relational anchor cap mitigates but does not eliminate. Specific councils flagged in our results JSON.

3. Lib Dem strongholds with patchy 2024 local data

About 10–15 councils where Lib Dem 2024 LOCAL council vote share was unavailable. We use national-share proxy fallback (national share / 2). May understate true local LD strength.

4. High between-run variance for genuine toss-ups

At temperature 0.35, some genuinely-marginal councils flip winner between runs. v5 mitigation: tighter elasticity (±5pp) for parties with strong local prev. KPM-2 will move to multi-seed averaging.

5. Statistical baseline source mismatch on edge councils

For a small number of councils, the baseline uses county-wide rural data instead of council-specific. Identified in 1–2 results. Documented in methodology JSON.

Citation

If you use KPM-1 outputs in academic or journalistic work, please cite:

Critical feedback particularly welcome

This is the first public election test of the methodology. We will publish the post-mortem on 8 May whether the predictions verify or miss. If you spot a methodological problem before then, drop a line to jason@kronaxis.co.uk — the more critical the better.